If you’re serious about crypto, you eventually hit the same wall: raw blockchain data is everywhere, but getting precise answers is painfully hard. “How many new wallets actually stuck around after using my dApp?” or “Which liquidity providers are quietly exiting?” Suddenly you’re knee‑deep in hex, logs, and weird indexing rules. Advanced query techniques for on-chain data analysis exist exactly to cut through this noise and turn block history into crisp, actionable insight instead of CSV chaos.

Historical context: from block explorers to query engines

Early on, everyone treated chains like glorified ledgers. You had block explorers, a few APIs, and maybe some hacked-together scripts. Queries were shallow: balances, transfers, token holders. As DeFi and NFTs exploded, that stopped being enough. Teams needed behavioral questions answered: cohort retention, protocol revenue, risk exposure across chains. That’s when on-chain data analytics tools started to look less like explorers and more like full-scale data warehouses. Indexers, ETL pipelines, columnar storage, and SQL layers appeared on top of nodes. Over time, analytics vendors moved from static snapshots to streaming architectures, making it possible to ask not just “what happened?” but “what’s unfolding right now?” on production-grade infrastructure.

At the same time, power shifted from core devs to analysts and product people. You no longer had to be the engineer running an archive node to ask sophisticated questions. Instead, advanced query stacks wrapped RPC, parsing, and decoding logic into a service. This freed analysts to experiment with complex joins, window functions, and labeling strategies, while dashboards turned into living control panels rather than monthly reports.

Why this history matters for your day‑to‑day work

If you still approach questions like “Who are my whales?” with explorer exports and spreadsheets, you’re using 2018 tactics in a 2025 environment. Knowing how the stack evolved explains why certain shortcuts (like relying only on transfers) miss half the story.

Core principles of advanced on-chain queries

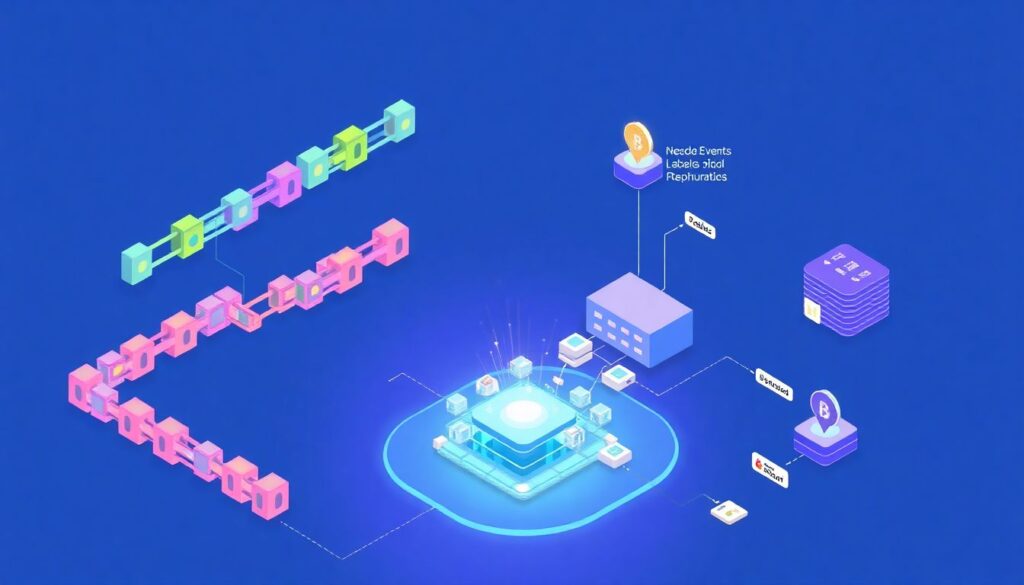

Let’s strip the buzzwords. Advanced blockchain data query solutions all rest on a few core ideas: normalized schemas, decoded events, and reproducible labels. Normalization means every chain, token, and wallet lands in a coherent data model so you can write one query that runs across multiple networks. Decoding turns raw logs and calldata into human‑readable actions like “swap”, “borrow”, or “mint”. Labels then attach semantics to addresses: contracts vs EOAs, bots vs humans, CEX hot wallets vs long‑term holders. Once these layers exist, you stop querying “logs” and start querying “behaviors”. Add robust time handling, deduplication, and chain reorg logic, and your metrics become something you can actually trust in a board meeting.
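Here is a minimal Python sketch of the decoding and labeling layers. It turns one raw ERC-20 `Transfer` log into a readable row and attaches an address label. It assumes logs shaped like JSON-RPC `eth_getLogs` results (`address`, `topics`, `data`, `transactionHash`). The `DecodedTransfer` type and the `labels` dictionary are hypothetical stand-ins for a platform's decoded tables and label dimension.

```python
from dataclasses import dataclass
from typing import Optional

# keccak256("Transfer(address,address,uint256)"): topic0 of the standard ERC-20 Transfer event
TRANSFER_TOPIC = "0xddf252ad1be2c89b69c2b068fc378daa952ba7f163c4a11628f55a4df523b3ef"


@dataclass
class DecodedTransfer:  # hypothetical decoded-row type
    tx_hash: str
    token: str
    sender: str
    recipient: str
    amount_raw: int  # amount in the token's smallest unit (decimals not applied)
    recipient_label: str


def topic_to_address(topic: str) -> str:
    """Indexed addresses are left-padded to 32 bytes; keep the last 20 bytes (40 hex chars)."""
    return "0x" + topic[-40:].lower()


def decode_transfer(log: dict, labels: dict) -> Optional[DecodedTransfer]:
    """Decode an eth_getLogs-style log; return None if it is not an ERC-20 Transfer."""
    topics = log["topics"]
    # ERC-721 Transfer shares topic0 but indexes tokenId as a 4th topic, so require exactly 3.
    if len(topics) != 3 or topics[0].lower() != TRANSFER_TOPIC:
        return None
    recipient = topic_to_address(topics[2])
    return DecodedTransfer(
        tx_hash=log["transactionHash"],
        token=log["address"].lower(),
        sender=topic_to_address(topics[1]),
        recipient=recipient,
        amount_raw=int(log["data"], 16),  # the non-indexed uint256 value
        recipient_label=labels.get(recipient, "unlabeled"),  # hypothetical label lookup
    )
```

Real pipelines also apply token decimals, handle non-standard tokens, and account for reorgs. Those steps are omitted here.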

Two principles quietly separate amateurs from professionals. First, query idempotency: rerunning the same logic tomorrow should yield the same numbers for any closed historical window, even as new blocks arrive. Second, explicit assumptions: your query should encode what “user”, “active”, and “volume” mean, rather than leaving them as informal verbal definitions.
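Both principles can be written directly into code. The sketch below is illustrative only: `ActiveUserDefinition`, its default thresholds, and the event fields are hypothetical. Every assumption behind “active” becomes a named parameter. The metric is computed over a closed window pinned to an `as_of` timestamp, so events timestamped after the cutoff cannot change a number you have already reported.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ActiveUserDefinition:
    """Hypothetical metric definition: every assumption is a named, reviewable parameter."""
    min_usd_value: float = 10.0  # interactions below this are treated as dust
    excluded_labels: frozenset = frozenset({"bot", "cex_hot_wallet"})
    window_days: int = 7


def active_users(events, labels, as_of: datetime, definition: ActiveUserDefinition) -> int:
    """Distinct wallets with a qualifying event in the closed window [as_of - window, as_of).

    Pinning the window to as_of means events timestamped after the cutoff
    cannot change the result for the same as_of value.
    """
    start = as_of - timedelta(days=definition.window_days)
    return len({
        e["wallet"]
        for e in events
        if start <= e["block_time"] < as_of
        and e["usd_value"] >= definition.min_usd_value
        and labels.get(e["wallet"]) not in definition.excluded_labels
    })
```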

Working with schemas instead of raw nodes

Talking directly to nodes is fine for engineering checks, but sustained analysis belongs on a proper blockchain data analytics platform that exposes curated tables for transactions, traces, events, and decoded protocol actions. You focus on logic; the platform handles indexing headaches.

Practical techniques: how to build queries that actually help

Start with entity modeling. Before opening your favorite real-time on-chain data analysis platform, define exactly what your “unit” is: wallet, user, position, trade, pool, or protocol. For something like a DEX, positions might be more relevant than wallets, because one wallet can hold multiple LP positions with different risk levels. Then, build derived tables that encode this entity once, instead of recomputing joins in every dashboard. For instance, a “user_sessions” table could map each wallet to logical sessions based on gaps in activity, letting you measure stickiness with simple aggregations. This is where on-chain analytics software for crypto gets fun: you layer behavioral features (first_tx_time, last_active_day, protocol_count, chain_count) and then segment users like a growth team would on a Web2 product.
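Here is one way to sketch the “user_sessions” idea and the behavioral features in plain Python. The 30-minute inactivity gap, the input field names, and both function names are assumptions for illustration. In production, the same logic would typically live in a derived SQL table that is built once.

```python
from collections import defaultdict
from datetime import datetime, timedelta

SESSION_GAP = timedelta(minutes=30)  # assumption: 30 idle minutes end a session


def build_user_sessions(txs, gap=SESSION_GAP):
    """Return (wallet, session_id, session_start, session_end, tx_count) rows."""
    times_by_wallet = defaultdict(list)
    for tx in txs:
        times_by_wallet[tx["wallet"]].append(tx["block_time"])
    rows = []
    for wallet, times in times_by_wallet.items():
        times.sort()
        session_id, start, prev, count = 0, times[0], times[0], 1
        for t in times[1:]:
            if t - prev > gap:  # inactivity gap: close the current session, open a new one
                rows.append((wallet, session_id, start, prev, count))
                session_id, start, count = session_id + 1, t, 0
            count += 1
            prev = t
        rows.append((wallet, session_id, start, prev, count))
    return rows


def wallet_features(txs):
    """Per-wallet behavioral features used for segmentation."""
    acc = {}
    for tx in txs:
        f = acc.setdefault(tx["wallet"], {"times": [], "protocols": set(), "chains": set()})
        f["times"].append(tx["block_time"])
        f["protocols"].add(tx["protocol"])
        f["chains"].add(tx["chain"])
    return {
        wallet: {
            "first_tx_time": min(f["times"]),
            "last_active_day": max(f["times"]).date(),
            "protocol_count": len(f["protocols"]),
            "chain_count": len(f["chains"]),
        }
        for wallet, f in acc.items()
    }
```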

From there, window functions become your best friend. Rolling 7‑day volume, 30‑day retention, and “time to second action” all depend on analytic functions like ROW_NUMBER, LAG, and SUM OVER(PARTITION BY …). Instead of exporting data to Python for everything, push as much logic into SQL as possible; it’s easier to audit and share.
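The following small, runnable example pushes this logic into SQL. It uses Python's built-in `sqlite3` as a stand-in engine; SQLite has supported window functions since version 3.25. The `swaps` table, its integer-second `block_time`, and the rows are hypothetical. The query uses `ROW_NUMBER`, `LAG`, and `SUM(...) OVER` to compute time to second action and a running per-wallet volume.

```python
import sqlite3

# In-memory stand-in for a curated swaps table (hypothetical schema and data).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE swaps (wallet TEXT, block_time INTEGER, usd_value REAL)")
conn.executemany(
    "INSERT INTO swaps VALUES (?, ?, ?)",
    [("a", 100, 50.0), ("a", 400, 20.0), ("a", 900, 30.0), ("b", 200, 10.0)],
)

# ROW_NUMBER numbers each wallet's actions in time order, LAG fetches the
# previous action's timestamp, and SUM ... OVER gives a running per-wallet total.
TIME_TO_SECOND_ACTION = """
WITH ordered AS (
    SELECT
        wallet,
        block_time,
        ROW_NUMBER() OVER (PARTITION BY wallet ORDER BY block_time) AS action_n,
        LAG(block_time) OVER (PARTITION BY wallet ORDER BY block_time) AS prev_time,
        SUM(usd_value) OVER (PARTITION BY wallet ORDER BY block_time) AS running_usd
    FROM swaps
)
SELECT wallet, block_time - prev_time AS seconds_to_second_action, running_usd
FROM ordered
WHERE action_n = 2          -- wallets with a single action drop out here
ORDER BY wallet
"""
rows = conn.execute(TIME_TO_SECOND_ACTION).fetchall()
```

Because the logic lives in one SQL string, it can be reviewed and versioned like any other code and reused across dashboards.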

User funnels and cohorts on-chain

A simple but powerful pattern: treat every distinct combination of wallet + protocol as a “user-protocol journey”. Track step 1 as “first interaction”, step 2 as “second interaction within N days”, and so on. Suddenly you have genuine funnels and cohorts, not just aggregate counts.
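The following sketch builds the user-protocol journey funnel and groups journeys into weekly cohorts by first interaction. The field names, the `journey_funnel` function, the ISO-week cohorting, and the default N of 7 days are all assumptions chosen for illustration.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def journey_funnel(interactions, n_days=7):
    """Step 1 = first interaction of a (wallet, protocol) journey;
    step 2 = a second interaction within n_days of the first.

    Returns {(iso_year, iso_week): (step1_count, step2_count)}, keyed by the
    ISO week of each journey's first interaction.
    """
    journeys = defaultdict(list)
    for i in interactions:
        journeys[(i["wallet"], i["protocol"])].append(i["block_time"])
    funnel = defaultdict(lambda: [0, 0])
    for times in journeys.values():
        times.sort()
        first = times[0]
        cohort = tuple(first.isocalendar()[:2])
        funnel[cohort][0] += 1
        if len(times) > 1 and times[1] - first <= timedelta(days=n_days):
            funnel[cohort][1] += 1
    return {cohort: tuple(counts) for cohort, counts in funnel.items()}
```

Dividing step 2 by step 1 per cohort gives a conversion rate that can be compared week over week.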

Cross‑protocol and cross‑chain analysis

Today’s user rarely lives on a single app or chain. To measure real behavior, you need joins across datasets that historically lived in different silos. Good on-chain data analytics tools expose unified address and token references so you can follow wallets as they bridge, swap, lend, and farm across different ecosystems. Practically, this means building an address dimension table that merges chain‑specific formats into a chain‑agnostic key, and adding protocol‑level identifiers to interactions. With that, a single query can answer: “Which users who bridged from chain A to chain B actually opened a position in protocol X within 72 hours?” or “What share of our volume comes from wallets that also used a particular competitor over the last month?”
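The following sketch builds a chain-agnostic address key under one explicit assumption. EVM addresses are hex strings whose mixed casing is only an EIP-55 checksum, so lowercasing lets the same address match across EVM chains. That match is reliable for externally owned accounts controlled by one key. The same contract address on two chains, however, is not guaranteed to have the same owner. Non-EVM formats are kept namespaced by chain rather than merged. The function names and key prefixes are hypothetical.

```python
import re

EVM_ADDRESS = re.compile(r"^0x[0-9a-fA-F]{40}$")


def address_key(chain: str, raw: str) -> str:
    """Hypothetical chain-agnostic key: EVM addresses share one lowercase key;
    anything else stays namespaced by its chain."""
    raw = raw.strip()
    if EVM_ADDRESS.match(raw):
        return "evm:" + raw.lower()  # EIP-55 casing is a checksum, not identity
    return f"{chain}:{raw}"


def build_address_dim(rows):
    """rows: iterable of (chain, raw_address). Returns {address_key: sorted chains seen}."""
    dim = {}
    for chain, raw in rows:
        dim.setdefault(address_key(chain, raw), set()).add(chain)
    return {key: sorted(chains) for key, chains in dim.items()}
```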

When you design these joins, be explicit about time windows and directionality. “After bridging” usually means “within N blocks or hours after bridge confirmation”, not “ever after”. Encoding this carefully avoids misleading attribution claims.
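The sketch below answers the bridge question above with a windowed match that states the direction and time window explicitly. All field names and the function are hypothetical. The nested loop is for readability only; in SQL, the same logic is a join on wallet with a range predicate on the position's open time.

```python
from datetime import datetime, timedelta


def bridged_then_opened(bridges, positions, src_chain, dest_chain, protocol,
                        window=timedelta(hours=72)):
    """Wallets that bridged src_chain -> dest_chain and then opened a position in
    `protocol` on dest_chain within `window` AFTER bridge confirmation.

    Direction matters: positions opened before the bridge confirmed do not count.
    """
    hits = set()
    for b in bridges:
        if b["src_chain"] != src_chain or b["dest_chain"] != dest_chain:
            continue
        deadline = b["confirmed_at"] + window
        for p in positions:
            if (
                p["wallet"] == b["wallet"]
                and p["protocol"] == protocol
                and p["chain"] == dest_chain
                and b["confirmed_at"] <= p["opened_at"] <= deadline
            ):
                hits.add(b["wallet"])
    return hits
```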

Detecting patterns and anomalies in practice

Anomaly detection doesn’t require fancy ML at first. Baseline user or volume behavior by hour and day of week, then compare current metrics with Z‑scores or simple percentage deviations straight in SQL. You’ll quickly see wash trading bursts, inorganic airdrop farming, and sudden liquidity exits.
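The following minimal sketch builds an hour-of-week baseline and scores new values with Z-scores. It is shown in Python for self-containment; the same aggregation maps to average and standard-deviation aggregates in most SQL engines. The thresholds (|z| > 3, 50% deviation) and the function names are assumptions. The percentage fallback covers slots whose history is perfectly flat, where a Z-score is undefined.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean, pstdev


def hour_of_week(ts: datetime) -> int:
    """Slot 0..167, where Monday 00:00 is slot 0."""
    return ts.weekday() * 24 + ts.hour


def build_baseline(history):
    """history: (timestamp, value) pairs, e.g. hourly volume. Returns {slot: (mean, std)}."""
    buckets = defaultdict(list)
    for ts, value in history:
        buckets[hour_of_week(ts)].append(value)
    return {slot: (mean(vals), pstdev(vals)) for slot, vals in buckets.items()}


def is_anomalous(baseline, ts, value, z_threshold=3.0, pct_threshold=0.5):
    slot = hour_of_week(ts)
    if slot not in baseline:
        return False  # no history for this slot yet
    mu, sigma = baseline[slot]
    if sigma > 0:
        return abs(value - mu) / sigma > z_threshold  # Z-score test
    # Flat history makes the Z-score undefined: fall back to percentage deviation.
    if mu == 0:
        return value != 0
    return abs(value - mu) / abs(mu) > pct_threshold
```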

Implementation examples with a practical focus

Imagine you’re building an internal analytics stack on top of a commercial blockchain data analytics platform. Step one: define curated views. Create “fact” views for swaps, transfers, liquidations, and mints across the protocols you care about. Each view should expose standardized columns: tx_hash, block_time, wallet, counterparty, token_in, token_out, usd_value. Step two: add dimension tables for addresses, tokens, and protocols, including labels such as “dex”, “lending”, “CEX_deposit”, “market_maker”. Step three: wire your BI tool directly to this schema and lock your logic into version‑controlled SQL files. That’s how you move from ad‑hoc querying to a stable “source of truth” layer. Over time, you can automate daily materializations so heavy joins run once, not on every dashboard refresh.
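The three steps can be sketched end to end with `sqlite3` as a stand-in warehouse. The raw table `raw_dex_swaps`, its columns, and the sample rows are hypothetical. The view exposes the standardized columns listed above, and the dimension table supplies the labels.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # SQLite stands in for your warehouse in this sketch
conn.executescript(
    """
    -- Hypothetical raw table; real platforms name and shape these differently.
    CREATE TABLE raw_dex_swaps (
        tx_hash TEXT, block_time TEXT, trader TEXT, pool TEXT,
        token_sold TEXT, token_bought TEXT, amount_usd REAL
    );
    -- Dimension table: one row per address with a semantic label.
    CREATE TABLE dim_addresses (address TEXT PRIMARY KEY, label TEXT);

    -- Curated fact view exposing the standardized column set.
    CREATE VIEW fact_swaps AS
    SELECT tx_hash, block_time, trader AS wallet, pool AS counterparty,
           token_sold AS token_in, token_bought AS token_out, amount_usd AS usd_value
    FROM raw_dex_swaps;
    """
)
conn.executemany(
    "INSERT INTO raw_dex_swaps VALUES (?, ?, ?, ?, ?, ?, ?)",
    [
        ("0x01", "2024-01-01", "0xa", "0xpool", "WETH", "USDC", 1000.0),
        ("0x02", "2024-01-01", "0xb", "0xpool", "USDC", "WETH", 250.0),
        ("0x03", "2024-01-02", "0xa", "0xpool", "WETH", "USDC", 500.0),
    ],
)
conn.execute("INSERT INTO dim_addresses VALUES ('0xa', 'market_maker')")

# Dashboards query the curated layer, never the raw table.
VOLUME_BY_LABEL = """
SELECT COALESCE(d.label, 'unlabeled') AS label, SUM(f.usd_value) AS volume_usd
FROM fact_swaps AS f
LEFT JOIN dim_addresses AS d ON d.address = f.wallet
GROUP BY COALESCE(d.label, 'unlabeled')
ORDER BY volume_usd DESC
"""
rows = conn.execute(VOLUME_BY_LABEL).fetchall()
```

Keeping the `CREATE VIEW` statements and the metric queries in version-controlled `.sql` files is what turns them into the shared “source of truth” layer.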

At that point, deploying a new metric is no longer a multi‑day fire drill. A data person writes or modifies a query; the pipeline updates; stakeholders see it in dashboards without changing their workflows.

Using off‑the‑shelf platforms without losing rigor

If you rely on external on-chain analytics software for crypto, don’t treat it as a black box. Read the schema docs, inspect how popular community queries define key metrics, and fork them instead of starting from scratch.

DeFi risk and treasury monitoring

Treasury teams and risk desks live on streaming data. A robust real-time on-chain data analysis platform lets you define SQL‑based alerts: “Notify when protocol TVL drops 5% in 30 minutes” or “Flag any wallet transferring more than X% of our treasury to a new address.” In practice, this means combining event tables with price feeds and label dimensions, then materializing “risk_views” that contain only the features your monitors care about.
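The two example alerts can be sketched as plain functions. Several choices here are assumptions: “drops 5% in 30 minutes” is read as “the latest TVL is at least 5% below the peak within the trailing 30 minutes”, X is set to 10%, and a “new” address is one outside a known set. All names are hypothetical.

```python
from datetime import datetime, timedelta


def tvl_drop_alert(samples, window=timedelta(minutes=30), threshold=0.05):
    """samples: (timestamp, tvl_usd) pairs. True if the latest TVL is at least
    `threshold` below the peak TVL observed in the trailing `window`."""
    samples = sorted(samples)
    latest_ts, latest_tvl = samples[-1]
    peak = max(tvl for ts, tvl in samples if ts >= latest_ts - window)
    return peak > 0 and (peak - latest_tvl) / peak >= threshold


def flag_treasury_transfers(transfers, treasury_balance, known_addresses, max_share=0.10):
    """Transfers that send more than max_share of the treasury to an address
    outside known_addresses."""
    return [
        t for t in transfers
        if t["to"] not in known_addresses and t["amount"] > max_share * treasury_balance
    ]
```

Measuring against the trailing peak, rather than only the sample 30 minutes earlier, also catches a spike followed by a drop.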

Common misconceptions and how to avoid them

A few myths consistently derail teams. First: “The chain doesn’t lie, so my numbers must be correct.” The chain stores facts, but your interpretation can be wildly off. Mis‑decoded events, double‑counted internal calls, and counting failed transactions as if they succeeded can distort metrics by double‑digit percentages. Second: “I just need a good dashboard template.” Without a solid metric definition layer, dashboards become pretty but misleading. Third: “Running my own node is enough.” Nodes give raw access, not understanding; you still need indexing, enrichment, and aggregation logic. Finally, many people believe every new insight requires AI. In reality, most value comes from domain‑informed queries and disciplined data modeling on top of advanced blockchain data query solutions, not from black‑box models.
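The first myth is easiest to see with numbers. The sketch below works over hypothetical trace-level rows, where one user swap appears as an outer router call plus a nested pool call, and a reverted transaction still leaves a trace. The column names `tx_success` and `depth`, and the rule that the outermost call carries the user-facing amount, are assumptions; trace schemas differ across platforms.

```python
def swap_volume(trace_rows):
    """Compare a naive sum with a cleaned sum over hypothetical trace-level rows.

    Cleaning rules (assumptions for this sketch):
    - drop rows from reverted transactions (tx_success is False);
    - count only the outermost call (depth == 0), so nested pool calls
      inside the same swap are not double-counted.
    """
    naive = sum(r["usd_value"] for r in trace_rows)
    clean = sum(
        r["usd_value"] for r in trace_rows
        if r["tx_success"] and r["depth"] == 0
    )
    return naive, clean
```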

Another subtle misconception: thinking one query works forever. Protocol upgrades, contract migrations, and token wrappers constantly change how actions are recorded. Treat your analytics like code: version it, review it, and refactor it as the ecosystem evolves. This mindset keeps your insights trustworthy over the long haul.
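One way to make contract upgrades explicit is a small, version-controlled registry that maps each address to the protocol version active from a given block. A proxy upgrade at the same address, or a new deployment, then becomes a reviewed data change rather than a silent break in every query. The addresses, block numbers, protocol name, and `resolve_version` function below are hypothetical.

```python
from bisect import bisect_right

# Hypothetical registry: per address, (first_block, protocol, version) entries
# sorted by first_block. Kept under version control alongside the SQL.
CONTRACT_VERSIONS = {
    "0x" + "11" * 20: [(0, "example_lending", "v1"), (1_000_000, "example_lending", "v2")],
    "0x" + "22" * 20: [(1_200_000, "example_lending", "v3")],
}


def resolve_version(address, block_number):
    """Return (protocol, version) active at block_number, or None if unknown or not yet deployed."""
    entries = CONTRACT_VERSIONS.get(address.lower())
    if not entries:
        return None
    starts = [first_block for first_block, _, _ in entries]
    i = bisect_right(starts, block_number) - 1  # last entry starting at or before the block
    if i < 0:
        return None
    _, protocol, version = entries[i]
    return protocol, version
```

Decoders can then pick the right event layout per version instead of assuming one ABI for the contract's whole history.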