Why scraping and normalizing crypto data hurts more than it should

If you’ve ever tried to collect crypto prices from a few exchanges and suddenly found yourself with ten formats, missing fields, random nulls and timestamps from alternate universes, you already know the problem. Scraping and normalizing crypto data at scale isn’t just “write a script and cron it”. It’s a pipeline problem: unstable HTML, inconsistent APIs, rate limits, symbol chaos (BTCUSDT vs XBT-USD), and subtle rounding differences. If you don’t design with those in mind from day one, your backtests lie, your dashboards drift, and your alerts trigger at the worst possible moment. Let’s walk through a practical, end‑to‑end approach that doesn’t pretend everything is clean and friendly, but actually handles the messy parts.

At a high level you want three things: predictable ingestion, strict normalization, and reliable delivery to storage and downstream apps. That means designing your workflow like a real data product, not a weekend script. We’ll talk about choosing sources, dealing with formats, building a normalization layer, and hardening the whole thing for scale and failure.

Step 1: Decide what “good data” means for your use case

Before picking crypto data scraping tools or writing any code, you need a definition of “good enough”. Are you building a quant strategy that needs tick‑level data, or a monitoring dashboard that’s okay with 1‑minute candles? Do you care more about breadth (hundreds of altcoins) or reliability on a short list of majors? If you don’t lock this down, you’ll waste time over‑scraping low‑value sources or under‑collecting where accuracy matters. Formulate concrete SLAs for latency (e.g., sub‑second vs 10 seconds), completeness (percentage of trades captured), and acceptable gaps. This definition will later drive how aggressively you retry, how you handle outages, and what you store long term.

If everything is “critical”, nothing is. Mark symbols and venues by priority tiers and accept that some corners of the cryptoverse are permanently noisy.

- Tier A: majors on top exchanges, strict latency and uptime targets

- Tier B: liquid alts, looser latency, still monitored

- Tier C: fringe tokens, best‑effort only
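One way to make the tiers concrete is to write them down as data rather than tribal knowledge. The sketch below is only an illustration: the `TierPolicy` fields, the numbers, and the venue and symbol names are all assumptions, not recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TierPolicy:
    max_latency_s: float     # worst acceptable delay from event to storage
    min_completeness: float  # fraction of trades we expect to capture
    max_retries: int         # how hard ingestion fights for this tier


# Hypothetical numbers; tune them to your own SLAs.
TIERS = {
    "A": TierPolicy(max_latency_s=1.0, min_completeness=0.999, max_retries=8),
    "B": TierPolicy(max_latency_s=10.0, min_completeness=0.99, max_retries=4),
    "C": TierPolicy(max_latency_s=60.0, min_completeness=0.90, max_retries=1),
}

# Hypothetical assignment of (venue, symbol) pairs to tiers.
ASSIGNMENTS = {
    ("exchange_x", "BTC-USD"): "A",
    ("exchange_x", "SOL-USD"): "B",
}


def policy_for(venue: str, symbol: str) -> TierPolicy:
    """Pairs that nobody classified fall back to best-effort Tier C."""
    return TIERS[ASSIGNMENTS.get((venue, symbol), "C")]
```

Defaulting unknown pairs to Tier C means a newly listed token never inherits strict alerting by accident; promoting it is an explicit decision.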

Choosing sources: APIs first, HTML last

Most modern exchanges offer decent REST and WebSocket endpoints, and there is an ecosystem of cryptocurrency market data APIs built around them. Scraping HTML pages should be your last resort because layouts change often and silently. Prefer official exchange APIs, then reputable aggregators, then HTML only when nothing else exists. For each source, document: rate limits, supported symbols, time precision, and historical depth. That documentation will save you when something breaks at 3 a.m. and you have to decide whether it’s a bug, a data gap, or a new limitation.

A quick sanity rule: if an exchange doesn’t publish stable docs or status pages, don’t rely on it for core signals.

- Check whether WebSockets provide trades and order book updates; use REST for backfills

- Verify whether the API returns server time so you can align clocks

- Test error behavior: HTTP codes, throttling, empty payloads
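The per-source documentation and the clock check can both live in code. The following is a minimal sketch: `SourceProfile` and its fields are hypothetical, and the offset estimate assumes the request and response legs take roughly the same time, which is an approximation rather than a guarantee.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceProfile:
    """The facts you want written down before something breaks."""
    name: str
    max_requests_per_min: int
    time_precision: str      # e.g. "ms" or "us"
    history_depth_days: int
    has_server_time: bool


def estimate_clock_offset_ms(sent_ms: int, server_ms: int, received_ms: int) -> float:
    """Offset to add to local time to approximate the exchange's clock.

    Assumes the server stamped its time halfway through the round trip.
    """
    midpoint = (sent_ms + received_ms) / 2
    return server_ms - midpoint


def to_server_time_ms(local_ms: int, offset_ms: float) -> float:
    """Translate a local timestamp into the exchange's time base."""
    return local_ms + offset_ms
```

For example, a request sent at local time 1000 ms and answered at 1100 ms with a server time of 1250 ms suggests the exchange clock runs about 200 ms ahead of yours. Averaging several samples would smooth out network noise.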

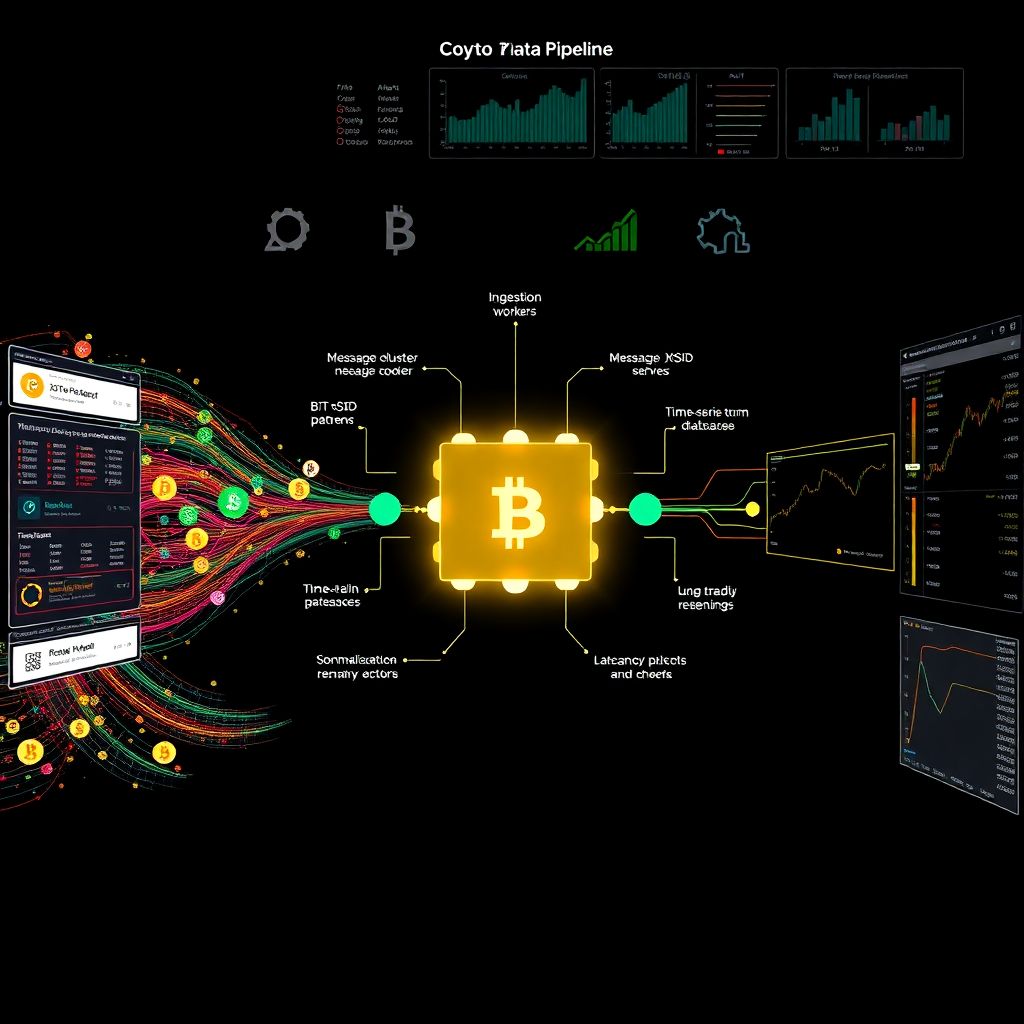

Step 2: Pick a stack built for streaming, not just scripts

For scraping at scale, you want components that separate concerns: ingestion workers, a message bus, a normalization service, and storage. Even if you start small, structuring it this way avoids rewriting everything when you grow. A simple, pragmatic setup looks like this: lightweight Python or Node workers connect to sources, push raw messages into Kafka or Redis Streams, a separate service reads from the stream, normalizes and enriches events, then writes them into a time‑series database and object storage for long‑term archives. This pipeline lets you reprocess from raw data whenever you upgrade your logic, instead of trying to fix everything in place.

The main principle: never couple source‑specific code to downstream consumers. Your trading bot or analytics dashboard should never care which exchange produced a message, only that the unified schema is valid.

- Use Docker to package workers so you can scale them per exchange

- Keep config (keys, endpoints, symbols) outside code in files or vaults

- Log both raw payload size and normalized event counts for audits
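To show the separation of concerns in miniature, here is a sketch that uses an in-process `queue.Queue` as a stand-in for Kafka or Redis Streams. The two exchange payload formats and all function names are invented for illustration; the point is only that source-specific parsing lives in one place and consumers see a single shape.

```python
import json
import queue
import time

raw_bus = queue.Queue()  # stand-in for Kafka / Redis Streams


def ingest(source: str, payload: str) -> None:
    """Ingestion worker: wrap the untouched payload and publish it."""
    raw_bus.put({"source": source, "received_at": time.time(), "payload": payload})


# Source-specific parsers live here and nowhere else. Both formats are invented.
def parse_exchange_a(msg: dict) -> dict:
    return {"symbol": msg["s"], "price": msg["p"], "size": msg["q"]}


def parse_exchange_b(msg: dict) -> dict:
    return {"symbol": msg["pair"], "price": msg["price"], "size": msg["amount"]}


PARSERS = {"exchange_a": parse_exchange_a, "exchange_b": parse_exchange_b}


def normalize_pending() -> list:
    """Normalization service: drain the bus and emit unified events."""
    events = []
    while not raw_bus.empty():
        envelope = raw_bus.get()
        event = PARSERS[envelope["source"]](json.loads(envelope["payload"]))
        event["venue"] = envelope["source"]
        event["received_at"] = envelope["received_at"]
        events.append(event)
    return events
```

Because the raw payload is stored as an untouched string, you can replay the bus through a newer parser later without asking the exchange for the data again.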

When to use ready‑made tools vs building your own

You don’t have to reinvent every wheel. Some crypto data aggregation software already provides robust connectors to multiple exchanges, with retries, symbol mapping and basic normalization. These platforms can shortcut the painful early phase, especially if your focus is research rather than infrastructure. The trade‑off: you lose some control over edge cases and may be tied to their schema. If your team is small or primarily quantitative, starting with a managed pipeline and then adding custom scrapers for niche venues often makes more sense than building an entire platform from scratch.

On the other hand, if you care about microsecond latencies, exotic instruments, or proprietary normalization logic, you’ll still end up writing your own ingestion and mapping layer sooner or later.

- Prototype with managed tools; migrate critical paths to custom code

- Keep your internal schema stable even if vendors change theirs

- Avoid deep lock‑in: be able to swap vendors without rewriting clients

Step 3: Implement robust scraping logic

At scale, failures are the norm, not the exception. Your scraping logic must treat “unreliable network” as the default state. That means exponential backoff with jitter, circuit breakers per exchange, and strict separation between transient errors and fatal misconfigurations. For WebSockets, track last seen sequence or trade ID so you can detect gaps and trigger backfills via REST. For REST polling, avoid synchronized bursts: randomize start offsets per worker to stay under rate limits. Always record metadata with each batch—source, endpoint, latency, any throttling headers—so you can later explain why a particular candle has fewer trades than expected.

Also, avoid turning network problems into data issues. If you miss a window due to downtime, mark the gap explicitly instead of silently stretching nearby data to fill it. Silent “fixes” at the scraping layer are the reason many backtests look amazing and then collapse in production.
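Marking gaps explicitly can be as simple as emitting a gap record whenever the sequence jumps. The sketch below assumes the stream carries contiguous integer sequence numbers, which is not true for every venue; `Gap` and `SequenceTracker` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gap:
    source: str
    first_missing: int
    last_missing: int


class SequenceTracker:
    """Tracks the highest sequence number seen on one stream."""

    def __init__(self, source: str):
        self.source = source
        self.last_seq = None

    def observe(self, seq: int):
        """Return a Gap if messages were skipped, otherwise None."""
        gap = None
        if self.last_seq is not None and seq > self.last_seq + 1:
            gap = Gap(self.source, self.last_seq + 1, seq - 1)
        # Late or duplicate messages never move the high-water mark backwards.
        if self.last_seq is None or seq > self.last_seq:
            self.last_seq = seq
        return gap
```

A returned `Gap` is something you can store alongside the data and hand to a REST backfill job, so the missing window is visible instead of papered over.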

- Limit max retries to avoid endless loops on bad credentials

- Tag every event with a source ID and ingestion timestamp

- Persist raw bytes or JSON blobs before transformation
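The retry rules above fit in a few lines. This is a sketch, not a full client: the two exception classes are hypothetical, you would map HTTP status codes onto them yourself, and the jitter variant used here is "full jitter" (a uniform draw up to the exponential ceiling). Circuit breakers are left out for brevity.

```python
import random
import time


class FatalError(Exception):
    """Misconfiguration such as bad credentials: retrying will not help."""


class TransientError(Exception):
    """Timeouts, throttling, server errors: worth another attempt."""


def backoff_delay(attempt: int, base: float = 0.5, cap: float = 30.0) -> float:
    """Full jitter: uniform in [0, min(cap, base * 2**attempt)] seconds."""
    return random.uniform(0.0, min(cap, base * (2 ** attempt)))


def fetch_with_retry(fetch, max_retries: int = 5, sleep=time.sleep):
    """Call fetch() until it succeeds or transient retries are exhausted."""
    for attempt in range(max_retries + 1):
        try:
            return fetch()
        except TransientError:
            if attempt == max_retries:
                raise  # give up loudly instead of looping forever
            sleep(backoff_delay(attempt))
        # FatalError is deliberately not caught, so it propagates at once.
```

Passing `sleep` as a parameter keeps the function testable without real waiting, and the random delay keeps a fleet of workers from retrying in lockstep.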

Working with HTML when you must

Sometimes there is no API, only a flashy trading page and your resolve. When you scrape HTML, assume it will break frequently. Use CSS selectors or XPath that rely on structural patterns, not brittle text labels. Build parsers that validate every field: if a price doesn’t parse as a float, discard that row and log the issue rather than forcing a parse. Keep a versioned test suite with real HTML snapshots, so a layout change shows up as broken tests instead of corrupt time series. HTML scraping should live in its own module, feeding the same raw event format as APIs, so the normalization and storage layers remain unchanged.

In short, isolate the chaos: everything ugly happens as close to the source as possible, with strict checks before data enters your trusted pipeline.

- Throttle HTML scrapers more aggressively to avoid bans

- Rotate user agents, and respect robots.txt and each site’s terms of service

- Alert when HTML payload structure deviates from known patterns
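Field validation for scraped rows might look like the sketch below. It assumes you have already extracted the cell text into a dict of strings (the extraction itself depends on the page), that commas are thousands separators, and it parses with `Decimal` instead of float to avoid rounding surprises. The function names are hypothetical.

```python
import logging
from decimal import Decimal, InvalidOperation
from typing import Optional

log = logging.getLogger("html_scraper")


def parse_decimal(text: str) -> Optional[Decimal]:
    """Parse a displayed number such as '50,123.40'; None if it is not one."""
    try:
        value = Decimal(text.replace(",", "").strip())
    except InvalidOperation:
        return None
    # Reject NaN and Infinity, which Decimal would otherwise accept.
    return value if value.is_finite() else None


def validate_row(cells: dict) -> Optional[dict]:
    """Return a clean row, or None (plus a log line) if any field is bad."""
    symbol = cells.get("symbol", "").strip()
    price = parse_decimal(cells.get("price", ""))
    size = parse_decimal(cells.get("size", ""))
    if not symbol or price is None or price <= 0 or size is None or size < 0:
        log.warning("discarding unparseable row: %r", cells)
        return None
    return {"symbol": symbol, "price": price, "size": size}
```

Running this against saved HTML snapshots in a test suite turns a layout change into a failing test rather than a quiet stream of bad rows.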

Step 4: Design your normalization schema

Normalization is where you turn vendor‑specific noise into a consistent language. Start with a minimal but strict core schema: unified symbol, base/quote, venue, side, price, size, trade ID, event time, source time, ingestion time. Define each field precisely, including units and rounding rules. Don’t overfit to any single exchange’s quirks; instead, design a vendor‑agnostic view and maintain detailed mapping rules per source. For example, some venues send size in quote currency, others in base. Normalize to one convention and keep the original in an “extensions” field so you can debug discrepancies later.

Another crucial decision is how you treat timestamps. Use UTC everywhere and keep both “event_time” from the exchange and “ingestion_time” from your own system. This distinction lets you detect source lag and separate market delays from infrastructure issues.

- Keep enums (e.g., “BUY”/“SELL”) normalized and documented

- Store raw symbol plus normalized symbol mapping for every event

- Version your schema so downstream users know what they’re reading
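A core schema along these lines could be expressed as a frozen dataclass. Treat this as a sketch under assumptions: the field names, the `SCHEMA_VERSION` counter, and the choice of base units for size are illustrative, and the separate source time mentioned above is omitted to keep the example short.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from decimal import Decimal
from enum import Enum

SCHEMA_VERSION = 1  # hypothetical version counter


class Side(str, Enum):
    BUY = "BUY"
    SELL = "SELL"


@dataclass(frozen=True)
class Trade:
    instrument: str           # canonical ID
    base: str
    quote: str
    venue: str
    side: Side
    price: Decimal            # quote units per one base unit
    size: Decimal             # always in base units
    trade_id: str
    event_time: datetime      # exchange timestamp, UTC
    ingestion_time: datetime  # our own clock, UTC
    raw_symbol: str           # what the venue actually sent
    extensions: dict = field(default_factory=dict)
    schema_version: int = SCHEMA_VERSION


def size_in_base(size: Decimal, price: Decimal, size_is_quote: bool) -> Decimal:
    """Convert a quote-denominated size to base units; pass base sizes through."""
    return size / price if size_is_quote else size


def utc_from_ms(ms: int) -> datetime:
    """Epoch milliseconds to a timezone-aware UTC datetime."""
    return datetime.fromtimestamp(ms / 1000, tz=timezone.utc)
```

When a venue reports size in quote currency, the original number can go into `extensions` (for example under a key like `"raw_size"`) so discrepancies remain debuggable.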

Handling symbol mapping across exchanges

Symbol chaos is where a lot of pipelines quietly fail. BTC‑USD, XBTUSD, BTCUSDT, and weird variants like BTC‑PERP all coexist. Build a dedicated mapping layer that translates every vendor symbol into a canonical instrument ID. This layer should be data‑driven, not hard‑coded: think configuration files or a small mapping service that supports audit history. When an exchange lists a new pair, you add an entry; when they rename or delist, you update the mapping while preserving historical links. Your strategy logic should talk exclusively in canonical IDs, never raw exchange symbols, which prevents subtle bugs when two venues share similar but distinct tickers.

For derivatives, you’ll also need metadata like expiry, contract size, and margin type. Put these in a separate instrument catalog that your normalization process references, so you can update properties without rewriting the past.

- Maintain a “conflicts” list where symbols are reused with different meaning

- Expose mapping via an internal API for consistency across services

- Log unknown symbols as warnings, never silently guess
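A data-driven mapping layer can start as a lookup keyed by venue and raw symbol. In this sketch the venue names, the canonical ID format, and the table entries are all made up for illustration; in practice the table would be loaded from a config file or a mapping service rather than defined inline.

```python
import logging
from typing import Optional

log = logging.getLogger("symbol_map")

# Illustrative entries only; not a statement about any real venue's listings.
SYMBOL_MAP = {
    ("venue_a", "BTCUSDT"): "BTC-USDT.SPOT",
    ("venue_b", "XBTUSD"): "BTC-USD.SPOT",
    ("venue_c", "BTC-USD"): "BTC-USD.SPOT",
    ("venue_c", "BTC-PERP"): "BTC-USD.PERP",
}


def to_canonical(venue: str, raw_symbol: str) -> Optional[str]:
    """Translate a venue symbol to a canonical instrument ID.

    Unknown symbols are logged and returned as None, never guessed.
    """
    canonical = SYMBOL_MAP.get((venue, raw_symbol))
    if canonical is None:
        log.warning("unknown symbol %r on %s", raw_symbol, venue)
    return canonical
```

Keying on the pair `(venue, raw_symbol)` rather than the symbol alone is what protects you when two venues reuse the same ticker for different instruments.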

Step 5: Time series and storage strategy

Once normalized, you need to decide what to store and where. Raw trades, book snapshots, or just candles? A common pattern is: raw events in cheap object storage for reprocessing, plus curated aggregates in a time‑series database for fast queries. Choose a database that can handle high write throughput, long retention, and flexible tags for symbol, venue, and data type. Partitioning by time and symbol helps keep queries predictable. Plan retention policies explicitly: maybe you keep tick data for 6 months, 1‑second bars for 2 years, and longer‑horizon aggregates even longer. Without explicit policies, disks fill up with data nobody uses and your cluster slowly suffocates.

Compression and encoding matter too. Storing JSON forever is convenient but expensive; consider columnar or binary formats for archival buckets, with tooling to regenerate your “hot” database if needed.

- Separate production and research clusters to protect live workloads

- Export regular snapshots of key datasets for offline experiments

- Document which datasets are authoritative for each use case
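Partitioning and retention are easier to enforce when they are functions, not habits. The sketch below assumes an object-storage layout of `dataset/instrument/YYYY/MM/DD` and uses retention windows that mirror the examples in the text; the names and the layout are illustrative choices.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention policy mirroring the examples in the text.
RETENTION = {
    "ticks": timedelta(days=182),    # roughly 6 months
    "bars_1s": timedelta(days=730),  # roughly 2 years
}


def partition_key(dataset: str, instrument: str, event_time: datetime) -> str:
    """Storage prefix partitioned by dataset, symbol and UTC day.

    Expects a timezone-aware datetime.
    """
    day = event_time.astimezone(timezone.utc).strftime("%Y/%m/%d")
    return f"{dataset}/{instrument}/{day}"


def is_expired(dataset: str, partition_day: datetime, now: datetime) -> bool:
    """True when a partition is older than its dataset's retention window.

    Datasets without an explicit policy are kept indefinitely.
    """
    limit = RETENTION.get(dataset)
    return limit is not None and now - partition_day > limit
```

A scheduled cleanup job can walk the prefixes and delete whatever `is_expired` flags, which keeps the policy in one reviewable place.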

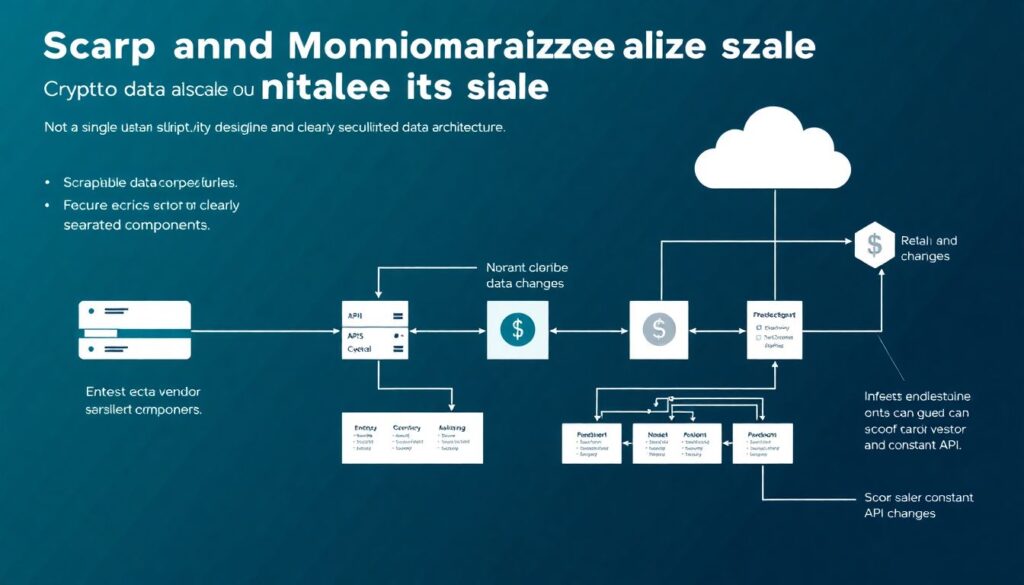

Enterprise‑grade feeds vs DIY

At some point you may be tempted to replace parts of your stack with a managed, enterprise‑grade crypto price data feed. These vendors aggregate dozens of exchanges, normalize symbols, and stream data over stable connections with SLAs. The key is to integrate them as another source, not the only source. Treat them as a high‑quality, low‑maintenance input that still flows through your normalization and monitoring layers. This way, if you later switch vendors or add direct exchange connections for latency‑sensitive flows, your internal consumers don’t notice the change.

Think of managed feeds as outsourced plumbing for the boring but critical parts, while you focus on logic, monitoring and integration with your own systems.

- Validate vendor data against a few direct sources to detect anomalies

- Avoid mixing vendor timestamps with your own without clear rules

- Track costs by data type and symbol group to avoid surprises
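Validating a vendor against direct sources can begin with a simple deviation check. This sketch compares the vendor price to the median of a few direct quotes taken at about the same moment; the 0.5% tolerance is an arbitrary placeholder, and the function names are hypothetical.

```python
from decimal import Decimal
from statistics import median


def vendor_deviation(vendor_price: Decimal, direct_prices: list) -> Decimal:
    """Relative distance between the vendor price and the median direct price."""
    reference = median(direct_prices)
    return abs(vendor_price - reference) / reference


def is_anomalous(vendor_price: Decimal, direct_prices: list,
                 tolerance: Decimal = Decimal("0.005")) -> bool:
    """True when the vendor price strays more than `tolerance` from the median."""
    return vendor_deviation(vendor_price, direct_prices) > tolerance
```

Using the median rather than the mean keeps one misbehaving direct source from dragging the reference price around.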

Step 6: Working with historical data at scale

Live scraping is only half the story; most real work relies on the past. A reliable historical crypto market data provider can save months of effort, especially if you need multi‑year depth across dozens of venues. The challenges: aligning their schema with yours, detecting gaps, and merging vendor history with your own collected data. Start by importing a limited set of symbols and check: volume consistency, timestamp ordering, and structural differences. Build migration scripts that convert external history into your canonical format, including your symbol IDs and event types. Keep the original vendor data in a “raw_import” bucket so you can re‑ingest if your schema evolves.

Once aligned, you can use this historical layer to backfill early periods where your scraper didn’t exist, or to sanity‑check your own coverage when exchanges had partial outages.

- Annotate imported periods so analysts know which provider they came from

- Run spot checks against exchange archives when available

- Automate reimports when your schema or logic changes
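The import checks for timestamp ordering and gaps can be automated with a single pass over the data. This sketch assumes fixed-interval bars identified by their open time in epoch seconds; note that on quiet markets some vendors legitimately emit no bar, so a reported gap is a prompt to investigate, not proof of missing data.

```python
def find_issues(timestamps: list, step_s: int) -> dict:
    """Scan bar open times (epoch seconds) for ordering problems and gaps."""
    out_of_order, duplicates, gaps = [], [], []
    for prev, cur in zip(timestamps, timestamps[1:]):
        if cur < prev:
            out_of_order.append(cur)
        elif cur == prev:
            duplicates.append(cur)
        elif cur - prev > step_s:
            # Record the first and last missing bar of the hole.
            gaps.append((prev + step_s, cur - step_s))
    return {"out_of_order": out_of_order, "duplicates": duplicates, "gaps": gaps}
```

For instance, one-minute bars at `[0, 60, 120, 300]` yield a single gap covering the bars at 180 and 240.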

APIs for internal and external consumers

After you’ve done the hard work of scraping and normalizing, expose it via a clean internal cryptocurrency market data API. Treat your own team as clients: clear endpoints for trades, candles, order books, and reference data, with explicit rate limits and query patterns. This prevents every team from writing its own ad‑hoc queries directly against the database, which eventually kills performance and introduces inconsistent logic. If you ever decide to commercialize access to your dataset, having that API boundary already in place makes the transition much easier.

Even if the API is “internal only”, secure it properly, keep versioned documentation, and avoid breaking existing consumers without deprecation windows.

- Offer both REST for pull and WebSocket or SSE for real‑time streams

- Return consistent error codes and structured error details

- Log usage per client to detect abusive or inefficient patterns
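Consistent, structured errors are mostly a matter of agreeing on one envelope. The sketch below is framework-agnostic and entirely illustrative: the envelope shape, the error codes, the `API_VERSION` label and the `MAX_CANDLES` cap are assumptions you would replace with your own conventions.

```python
from typing import Optional

API_VERSION = "v1"   # hypothetical version label
MAX_CANDLES = 1000   # hypothetical per-request cap


def error_response(status: int, code: str, message: str,
                   details: Optional[dict] = None) -> dict:
    """Every failure uses the same envelope, so clients parse errors once."""
    return {
        "api_version": API_VERSION,
        "status": status,
        "error": {"code": code, "message": message, "details": details or {}},
    }


def validate_candle_query(start_s: int, end_s: int, step_s: int) -> Optional[dict]:
    """Return None for an acceptable query, otherwise a structured error."""
    if step_s <= 0 or end_s <= start_s:
        return error_response(400, "INVALID_RANGE",
                              "end must be after start and step must be positive")
    requested = (end_s - start_s) // step_s
    if requested > MAX_CANDLES:
        return error_response(400, "RANGE_TOO_LARGE",
                              "narrow the time range or use a larger step",
                              {"requested": requested, "max": MAX_CANDLES})
    return None
```

Rejecting oversized ranges at the API boundary is what stops one careless notebook from scanning the whole database.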

Step 7: Monitoring, quality checks, and alerts

Without monitoring, even the cleanest design will quietly degrade. Build metrics focused on data quality, not just system health. Useful ones include: events per symbol per time bucket, ratio of empty to non‑empty responses per source, average and max latency between event_time and ingestion_time, and count of schema validation failures. When any of these shift beyond a baseline, trigger alerts that are specific, not vague. “BTC‑USDT trades from Exchange X dropped 80% in the last 5 minutes” is actionable; “something is wrong” is not. Combine automated checks with spot audits—periodically compare your aggregates against trusted third‑party sources.

Over time, these quality signals become more important than CPU or memory graphs, because they directly represent the value your pipeline delivers.

- Dashboards per venue and per key symbol help isolate issues fast

- Add synthetic tests: expected trades at known active times

- Include data quality status in your internal APIs
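A specific alert is mostly a matter of carrying the context into the message. This sketch compares the trade count in the current window with a baseline count for a comparable window; how you compute the baseline (rolling median, same hour last week) is up to you, and the 50% threshold is a placeholder.

```python
from typing import Optional


def volume_drop_alert(venue: str, symbol: str, current_count: int,
                      baseline_count: float, window_min: int,
                      threshold: float = 0.5) -> Optional[str]:
    """Return an actionable message when trade counts fall too far below baseline."""
    if baseline_count <= 0:
        return None  # no baseline yet, so nothing meaningful to compare against
    drop = 1 - current_count / baseline_count
    if drop < threshold:
        return None
    return (f"{symbol} trades from {venue} dropped {drop:.0%} "
            f"in the last {window_min} minutes")
```

Calling `volume_drop_alert("Exchange X", "BTC-USDT", 200, 1000, 5)` produces "BTC-USDT trades from Exchange X dropped 80% in the last 5 minutes".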

Scaling strategy: horizontal first, clever later

When load grows, your best ally is horizontal scaling. Because you separated ingestion, normalization, and storage, you can add more workers without touching core logic. Partition workloads by exchange, symbol set, or time slices, and let an orchestration system like Kubernetes handle placement and restarts. Only after you’ve exhausted simple concurrency and partitioning should you chase micro‑optimizations. Premature cleverness often introduces subtle bugs in ordering or aggregation that are hard to spot but costly in trading scenarios.

Always benchmark with realistic data rates and venue behaviors; synthetic “ideal” traffic hides the very failure modes that hit you in production.

- Scale write paths and read paths independently

- Stress‑test backfills; they’re often more demanding than live flows

- Keep one environment where you can safely replay whole days of data
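Partitioning by exchange and symbol needs an assignment that every process agrees on. The sketch below uses a SHA-256 digest because Python's built-in `hash()` for strings is randomized per process and would give different answers on different workers. The function name is hypothetical.

```python
import hashlib


def assign_worker(venue: str, symbol: str, n_workers: int) -> int:
    """Stable assignment: the same stream always lands on the same worker."""
    key = f"{venue}:{symbol}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    return int.from_bytes(digest[:8], "big") % n_workers
```

Keeping one stream on one worker preserves per-stream ordering. The trade-off of plain modulo assignment is that changing `n_workers` moves most streams; if you resize often, a consistent-hashing scheme or the partitioner built into your message bus is worth considering.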

Bringing it all together

Scraping and normalizing crypto data at scale is less about a single clever script and more about disciplined architecture. You pick reliable sources where possible, design a resilient pipeline with clear separation of roles, and enforce a strict schema that survives vendor quirks and API churn. You combine bespoke collectors with crypto data scraping tools and managed services where they make sense, always flowing everything through your own normalization and monitoring layers. Over time, this gives you an asset far more valuable than any single strategy: a trustworthy, explainable record of market behavior you can build on, experiment with, and confidently expose to others.

The payoff is simple but powerful: when your data story is coherent from raw tick to final chart, every new idea—whether it’s a trading signal, risk metric, or analytics product—ships faster, fails more honestly, and scales without rewriting the foundations.